Why data storage pricing does not mean anything anymore

Performance underneath: 1GB of data with 0.25 IOPS/GB or with 25 IOPS/GB?

We have already looked at this from a different angle, but it is worth repeating: systems offer different levels of functionality, and you should adjust any price comparison for this. Here is an example that compares the storage systems behind the actual services delivered in public cloud environments:

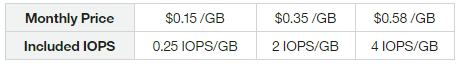

Softlayer:

Source: http://www.softlayer.com/block-storage, as of April 2016

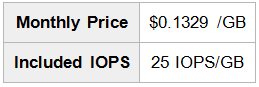

CloudSigma*:

*CloudSigma is a StorPool customer and we have the metrics of their systems. The quoted $/IOPS were measured at their Perth, Australia facility.

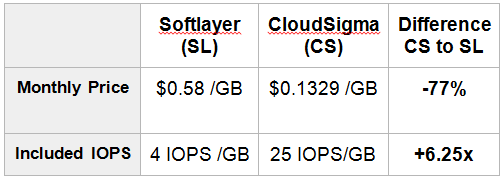

As you can see, CloudSigma is more than 4 times less expensive than Softlayer while delivering 6.25 times more IOPS/GB on the storage side. Multiplied together, that is a price/performance difference of about 25 times. To connect the dots: Softlayer runs on traditional storage arrays, while CloudSigma runs on a software-defined storage (SDS) stack, everything else being broadly similar.

Side by side comparison: Softlayer vs CloudSigma:
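The arithmetic behind the 25 times figure is simply the product of the two ratios quoted above. Here is a minimal sketch in Python that uses only those ratios. The function names and the per-GB price in the second half are illustrative assumptions, not figures from either provider's price list.

```python
def price_performance_ratio(price_ratio: float, iops_per_gb_ratio: float) -> float:
    """Combine 'times cheaper per GB' and 'times more IOPS/GB' into one factor."""
    return price_ratio * iops_per_gb_ratio


def dollars_per_iops(price_per_gb: float, iops_per_gb: float) -> float:
    """Price of one IOPS, given a per-GB price and the IOPS delivered per GB."""
    return price_per_gb / iops_per_gb


# Ratios quoted in the text: 4 times cheaper, 6.25 times more IOPS/GB.
print(price_performance_ratio(4.0, 6.25))  # 25.0

# The question from the heading: the same gigabyte at 0.25 or at 25 IOPS/GB.
# ASSUMPTION: 0.10 is a placeholder price per GB, used only to show the effect.
HYPOTHETICAL_PRICE_PER_GB = 0.10
slow = dollars_per_iops(HYPOTHETICAL_PRICE_PER_GB, 0.25)
fast = dollars_per_iops(HYPOTHETICAL_PRICE_PER_GB, 25.0)
print(f"$/IOPS at 0.25 IOPS/GB: {slow:.4f}")
print(f"$/IOPS at 25 IOPS/GB:   {fast:.4f}")
```

At an identical price per GB, the two tiers in the heading differ by a factor of 100 in what you pay per IOPS, which is why a $/GB figure alone says very little.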


How to reduce the data footprint of your storage system … and render it totally unusable?

Another point to consider is that choosing a storage system is about trade-offs. A system that costs $1,000 per usable TB with erasure coding and one that costs the same with replication will behave very differently in different use cases. This is because erasure coding, like deduplication, uses a lot of CPU power and RAM to process the data. Even if these features are available in the software package, enabling them reduces space requirements but also reduces performance, so your servers will not be able to run as much computation (if any at all). Either choice is OK, depending on your requirements, but it is important to highlight that a clear trade-off exists. This is one of the key reasons why we do not use, and do not recommend, deduplication and erasure coding for high-performance primary systems, especially if they run on the hypervisors (a converged setup, where the storage function runs as software on the same compute nodes).
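The space side of this trade-off is easy to quantify. The sketch below compares the raw capacity needed per usable TB under replication and under erasure coding. The specific schemes (3 copies, 8 data + 2 parity chunks) are assumptions chosen for illustration, not configurations taken from this article. The CPU and RAM cost that the paragraph warns about is not modelled.

```python
def replication_overhead(copies: int) -> float:
    """Raw TB stored per usable TB when every block is kept `copies` times."""
    return float(copies)


def erasure_coding_overhead(data_chunks: int, parity_chunks: int) -> float:
    """Raw TB stored per usable TB for a data+parity erasure coding scheme."""
    return (data_chunks + parity_chunks) / data_chunks


def raw_tb_needed(usable_tb: float, overhead: float) -> float:
    """Raw capacity to buy in order to expose `usable_tb` to applications."""
    return usable_tb * overhead


usable_tb = 100.0  # ASSUMPTION: example project size

# ASSUMPTION: 3-way replication versus an 8+2 erasure coding layout.
schemes = {
    "3x replication": replication_overhead(3),
    "8+2 erasure coding": erasure_coding_overhead(8, 2),
}

for name, overhead in schemes.items():
    raw = raw_tb_needed(usable_tb, overhead)
    print(f"{name}: {overhead:.2f} raw TB per usable TB, {raw:.0f} TB raw in total")
```

Erasure coding wins clearly on raw capacity here, which is exactly why it can hit the same price per usable TB with far fewer drives. The price it pays is in processing, so the two systems are not interchangeable for a latency-sensitive primary workload.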

Commodity versus specialized Hardware

In many cases, storage hardware vendors claim that their offerings are superior to software-only solutions because they come in a “special box”. However, no matter how much emphasis they put on specialized hardware, in most cases it is simply not true that a solution that comes in a storage box will outperform a good SDS implementation running on standard hardware. If you open a typical storage array, you will see a standard server: chassis, motherboard, CPU, RAM, network and drives. And yes, it is run by software. The vendor has put a fancy sticker on top and charges 5 to 10 times the retail price of the same hardware. In other words, there is nothing special inside these storage boxes. The magic is in the software, and that is where the functionality comes from.

Open-source (OSS) or not

There is a common misconception that open-source means free. However, this is not the point of OSS, and nothing in life is free: you always pay in one way or another. An open-source project is usually DIY, and customers have to spend time, effort and therefore cash to make it work. Proprietary solutions usually come “out of the box”, meaning you pay and someone sets it up for you. So there is a trade-off between time and money. You either spend time and maybe save money, or you spend money and save time.

Over time, IT infrastructure is becoming increasingly OSS-based, with more viable OSS alternatives to proprietary solutions appearing. Just think of SQL and MySQL, Windows and Linux, or VMware and KVM. However, it is usually a commercial vendor who spearheads a technology or product, and OSS catches up over time. Apart from some “religious” concerns as to which solutions are better, in most cases it simply boils down to a discussion about price and functionality. To keep within the scope of this post, we will look into technology and data storage pricing.

In our experience both proprietary and open-source solutions are OK, as long as they:

- do the job;

- match the bill.

Looking at the new SDS domain, the technology advantage is still on the side of the proprietary vendors. This has a direct impact on the business case: a paid proprietary solution may have a total solution cost (TSC) that is half that of an OSS alternative. For example, one of the popular open-source storage software solutions needs storage servers that cost approximately $10k per server and uses them for storage only, whereas StorPool only needs servers that cost approximately $2k per server. That is a saving of 5 times or more on hardware costs alone compared to the popular open-source alternative. So even if you do not pay for commercial support of the OSS product, you are still better off with the commercial product when you calculate the actual TSC/TCO.

This shows again that you always pay in one way or another, so make an educated choice on a price/performance and price/functionality basis, ensuring that you are considering the total solution cost.
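To make the comparison concrete, here is a minimal total solution cost sketch in Python. Only the per-server hardware prices ($10k and $2k) come from the text. The server count, the assumption that both options need the same number of servers, and the software figure are hypothetical placeholders; substitute real quotes before drawing any conclusion.

```python
from dataclasses import dataclass


@dataclass
class Solution:
    name: str
    servers: int
    hardware_per_server: float   # USD per server
    software_and_support: float  # USD, total for the project

    def hardware_cost(self) -> float:
        return self.servers * self.hardware_per_server

    def total_solution_cost(self) -> float:
        return self.hardware_cost() + self.software_and_support


SERVERS = 10  # ASSUMPTION: same node count for both options

# Hardware prices are from the text; no paid support for the OSS option.
oss = Solution("open-source", SERVERS, 10_000, 0)

# ASSUMPTION: 30_000 is a placeholder software price, not a real quote.
commercial = Solution("commercial", SERVERS, 2_000, 30_000)

for s in (oss, commercial):
    print(f"{s.name}: hardware ${s.hardware_cost():,.0f}, "
          f"total ${s.total_solution_cost():,.0f}")

hardware_ratio = oss.hardware_cost() / commercial.hardware_cost()
total_ratio = oss.total_solution_cost() / commercial.total_solution_cost()
print(f"hardware ratio: {hardware_ratio:.1f}x, total ratio: {total_ratio:.1f}x")
```

With these placeholder inputs the commercial option spends more on software than on hardware and still comes out ahead on the total, which is the pattern the article describes. Different inputs can reverse the result, so the comparison has to be done with matching parameters and real prices.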

The magic is in the software (and it always has been)

Understanding and realizing the previous point is crucial. Even now, the more expensive part of a storage solution is the software. However, as most solutions come as a bundle, customers do not see this, because the price split is hidden in the overall bundle cost.

Therefore it should come as no surprise that in the case of SDS, the price of the software is usually higher than the price of the hardware in a given project. This is to be expected and is certainly not a problem, as long as the TSC and TCO are better than those of alternative solutions with matching parameters and features/functionality.

This is Part 3, the final article in a series on data storage pricing. You can find Part 1 and Part 2 below. Feel free to share your views in the comments, forward the article to a friend or get in touch with us at info@storpool.slm.dev

Go to “A practical guide on storage pricing in the 21-st century: PART 1”

Go to “A practical guide on storage pricing in the 21-st century: PART 2”
