Why storage pricing does not mean anything anymore

OEM vs “branded” media

We have discussed this topic before in our post “5 Things Storage Vendors Are Not Telling You”, but it is fully valid here too. With traditional systems, your actual price is not just the price of the system you initially buy. You should also consider the price of the upgrade drives and system expansions that you will have to buy further down the line, once the vendor has locked you in. A standard drive that costs $500 from a major manufacturer or distributor is sold by traditional storage vendors (both SAN and all-flash/SSD) at 4 to 10 times the price charged by the maker. Again, the overwhelming majority of big storage vendors do not produce their own HDDs or SSDs. They source them directly from the HDD and SSD vendors: Samsung, Micron, Intel, WD, HGST, Seagate, etc. Regardless of what the sticker on top of the drive says (and despite the re-programmed, locked firmware), the drives in your system most likely come from one of the companies listed above, and not from a two- or three-letter storage vendor.
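To make the markup concrete, here is a minimal sketch of the arithmetic. The $500 drive price and the 4x to 10x range come from the paragraph above; the 24-drive expansion and the function name `expansion_cost` are our own hypothetical choices for illustration.

```python
def expansion_cost(drive_price_usd, drives_needed, vendor_markup):
    """Cost of an expansion when each drive is sold at `vendor_markup` times the maker's price."""
    return drive_price_usd * drives_needed * vendor_markup


MAKER_PRICE_USD = 500   # standard drive price, from the text
DRIVES_NEEDED = 24      # hypothetical expansion size

# markup 1 = buying standard drives directly; 4 and 10 = the range quoted above
for markup in (1, 4, 10):
    cost = expansion_cost(MAKER_PRICE_USD, DRIVES_NEEDED, markup)
    print(f"markup x{markup}: ${cost:,.0f}")
```

Under these assumptions the same 24 drives cost $12,000 at the maker's price and between $48,000 and $120,000 as a "branded" upgrade.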

This is just one of the reasons why it is good to use software-defined storage solutions: not only are they several times less expensive, but they also reduce vendor lock-in. Using standard drives has another benefit. Drives from traditional storage array vendors often come to market with some delay, while the vendors develop their own custom, locked firmware and do their best to squeeze extra dollars from already locked-in customers. By using standard drives, which are made by the same manufacturers anyway, you are free to use the latest generation of devices as soon as they are launched and available. These are usually better, faster and less expensive in terms of $ per GB/TB.

Think of the whole picture: TSC and TCO

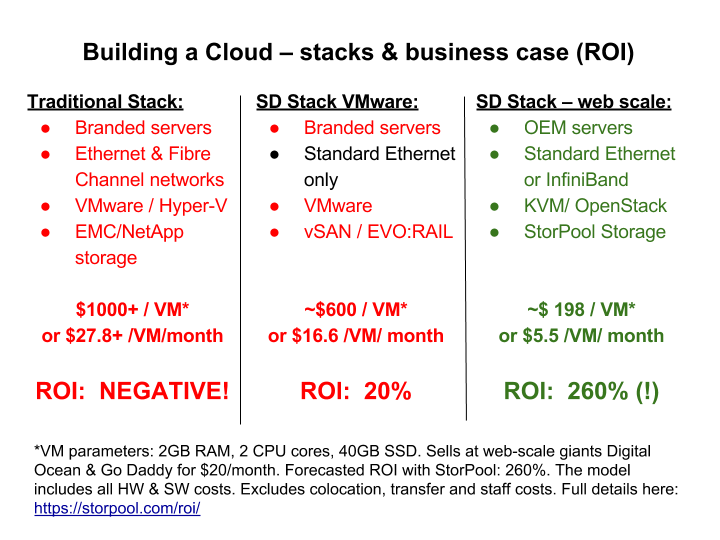

Below is an executive summary of the TSC and TCO. You can no longer compare storage systems directly; you need to compare the Total Solution Cost (TSC) and the Total Cost of Ownership (TCO), as the example below shows.

Building a software-defined infrastructure or datacenter (SDDC) is about a shift in your thinking and your approach to infrastructure. Software-defined storage is not just about storage anymore; it is about merging compute, networking and applications. Even if your solution is not hyper-converged, the different pieces are tightly coupled. By adopting an SDS solution you are not only selecting a storage system, you are also removing expensive and complex FC (Fibre Channel) networks, re-designing the compute part of your cloud and changing the density and physical footprint of the solution. As a result, you change your utility expenses, your labor costs and your entire TCO.

We see this point being overlooked the most, so let us make it very clear: two systems that have the same price per usable TB may have Total Solution Costs that differ many times over. The advantage here is on the side of software-defined infrastructure. This is, in fact, one of the fundamental reasons why the software-defined set of technologies came into existence and is being adopted.
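The point can be sketched in a few lines of code. Everything below is an assumption made for illustration: the `Solution` class, the way TSC and TCO are split into components, and all of the dollar figures are hypothetical, not measurements or vendor quotes.

```python
from dataclasses import dataclass


@dataclass
class Solution:
    name: str
    usable_tb: float
    storage_price: float   # the "sticker" price of the storage itself
    network_price: float   # e.g. FC switches and HBAs vs. standard Ethernet
    compute_price: float   # servers needed around the storage
    yearly_opex: float     # power, cooling, rack space, labor

    def price_per_tb(self) -> float:
        return self.storage_price / self.usable_tb

    def tsc(self) -> float:
        # Total Solution Cost: everything you must buy to make it work
        return self.storage_price + self.network_price + self.compute_price

    def tco(self, years: int) -> float:
        # Total Cost of Ownership: TSC plus running costs over the period
        return self.tsc() + self.yearly_opex * years


# Hypothetical numbers: identical $/TB, very different totals
san = Solution("traditional SAN", 100, 200_000, 150_000, 100_000, 60_000)
sds = Solution("SDS", 100, 200_000, 20_000, 60_000, 30_000)

for s in (san, sds):
    print(f"{s.name}: ${s.price_per_tb():,.0f}/TB, "
          f"TSC ${s.tsc():,.0f}, 3-year TCO ${s.tco(3):,.0f}")
```

With these made-up inputs both systems cost $2,000 per usable TB, yet the 3-year TCO is $630,000 for one and $370,000 for the other. Plug in your own quotes to see where your case lands.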

Beyond the IO: software-defined infrastructure ratio metrics

“This system does 144,000 IOPS”. OK, but are these read IOPS, write IOPS or mixed r/w (read/write)? What is the ratio in your use case: is it 30/40 r/w or is it 90/10 r/w? What is the size of your IO: 4 bytes, 4 KB or 4 MB? And to “simplify things”, is the IO parallel, or is it coming from one single user of the storage system?

The answers to these questions will have a significant impact on your actual price. Therefore it is better to use ratio metrics when comparing alternatives: $/TB (on a TSC or TCO basis), $/IOPS, IOPS/TB, etc. The better you describe your use case, the better you will navigate the purchasing process and the better your overall solution will be. Some vendors will go the extra mile and help tailor a good match to your use case, or will be upfront if they do not think they are a good fit. Others, however, will offer you a generic solution and then spend countless hours and dollars trying to convince you that “pigs can indeed fly”.
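As a rough sketch, the ratio metrics above can be computed like this. The function name `ratio_metrics`, the $300,000 total and the 100 TB capacity are hypothetical; only the 144,000 IOPS figure is borrowed from the example quote above.

```python
def ratio_metrics(total_cost_usd, usable_tb, iops):
    """Return the ratio metrics for one offer.

    `iops` should be measured with YOUR read/write mix, block size and
    parallelism; a datasheet number makes the ratios meaningless.
    """
    return {
        "usd_per_tb": total_cost_usd / usable_tb,
        "usd_per_iops": total_cost_usd / iops,
        "iops_per_tb": iops / usable_tb,
    }


# Hypothetical offer: $300,000 total (TSC or TCO), 100 TB usable, 144,000 IOPS
metrics = ratio_metrics(300_000, 100, 144_000)
for name, value in metrics.items():
    print(f"{name}: {value:,.2f}")
```

Computing the same three ratios for every offer, with the same cost basis and the same workload profile, is what makes the offers comparable.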

Know your case

If you have not got the message yet: pricing and comparing storage systems is not a straightforward exercise anymore. However, the better you know your needs and use case, the better off you will be. We have discussed the matter previously in our blog post “Five things to ask before you choose your new storage system”. It starts with measuring what you have now and planning what you will need in the future. A common case we see is a company saying “I need all-SSD performance”. It is like saying “I need a bigger shirt”. When we measure, we often see customers’ current systems peak at about 10k to 20k IOPS, and this is not the average load, it is the peak! In this example, more performance can be delivered even by an HDD-only distributed storage solution!

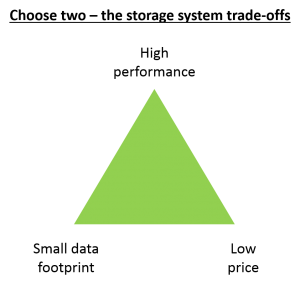

There is obviously a trade-off between cost and capacity, and between cost and performance. However, knowing where you stand and where you want to go will help you in two ways: 1) you will know what you are looking for, which will save time; 2) you will be able to normalize the vendors’ offers to match your use case and secure the best deal.

Thus we recommend using tools such as iostat, blktrace, sysbench and fio (which we like the best) to measure your current workloads and performance. This will help evaluate whether there are any bottlenecks in your current system and will give you a better idea of what you actually need.
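If you want a quick first number before running the tools above, the following sketch estimates read and write IOPS from two snapshots of the Linux `/proc/diskstats` file, which exposes the kernel's per-device I/O counters. The helper names and the sample snapshots are hypothetical; the field positions (reads completed and writes completed) follow the kernel's documented layout, but treat this as a rough illustration and not a replacement for fio or iostat.

```python
def parse_diskstats(text, device):
    """Return (reads_completed, writes_completed) for one device."""
    for line in text.splitlines():
        fields = line.split()
        # fields: major, minor, name, reads completed, reads merged,
        # sectors read, ms reading, writes completed, ...
        if len(fields) >= 8 and fields[2] == device:
            return int(fields[3]), int(fields[7])
    raise ValueError(f"device {device!r} not found")


def iops_between(before, after, device, seconds):
    """Average read and write IOPS between two snapshots taken `seconds` apart."""
    r0, w0 = parse_diskstats(before, device)
    r1, w1 = parse_diskstats(after, device)
    return (r1 - r0) / seconds, (w1 - w0) / seconds


# Made-up snapshots taken 10 seconds apart. On a live Linux system you would
# read /proc/diskstats, sleep for the interval, and read it again.
BEFORE = "   8       0 sda 1000 0 8000 500 2000 0 16000 900 0 700 1400"
AFTER = "   8       0 sda 4000 0 32000 800 3000 0 24000 1100 0 900 1900"

read_iops, write_iops = iops_between(BEFORE, AFTER, "sda", 10)
read_share = 100 * read_iops / (read_iops + write_iops)
print(f"read {read_iops:.0f} IOPS, write {write_iops:.0f} IOPS, "
      f"{read_share:.0f}% reads")
```

Sampling like this over a full business cycle, and keeping the highest interval, gives you the peak IOPS and read/write ratio to bring to vendors.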

What you actually need

In relation to the point above, in many cases vendors try to sell you what you simply do not need, be it the most expensive package they have or a bundle of features, most of which you will never use. As with shopping in general, the biggest discount you can get is by not buying something that you do not actually need. We see this daily with all-flash arrays, which are becoming overhyped to a certain extent. They are good, and they have excellent performance. However, they come at a high cost and are still an additional layer of specialized hardware that you have to manage.

However, in most environments an “all-flash” level of performance is in practice not needed, and where it is needed, it can be delivered by a distributed storage solution at a lower cost, while also reducing complexity and vendor lock-in. It is just that customers are not aware of which solution fits best. They try less expensive “hybrid arrays”, which are generally a good fit, except that they cache, and caching means inconsistent performance. If you hit the cache, you achieve all-flash performance. If you do not hit the cache (for example, because you have a lot of random reads), you get HDD performance, and the result is not predictable.
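A simple model shows why cache misses hurt so much. This is a back-of-the-envelope sketch: the weighted-average formula is a common simplification, and the 0.1 ms flash and 8 ms HDD latencies are illustrative assumptions, not measurements of any particular array.

```python
def avg_latency_ms(hit_ratio, flash_ms, hdd_ms):
    """Expected latency when a fraction `hit_ratio` of requests hit the flash cache."""
    return hit_ratio * flash_ms + (1 - hit_ratio) * hdd_ms


FLASH_MS = 0.1   # assumed flash latency
HDD_MS = 8.0     # assumed HDD random-read latency

for hit_ratio in (1.0, 0.95, 0.8, 0.5):
    latency = avg_latency_ms(hit_ratio, FLASH_MS, HDD_MS)
    print(f"hit ratio {hit_ratio:.0%}: ~{latency:.2f} ms average")
```

Under these assumptions, even a 95% hit ratio gives an average latency several times higher than pure flash, and the individual misses still take the full HDD latency, which is where the unpredictability comes from.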

What most customers really need today is a solution that does “Hybrid SSD”, i.e. one that delivers all-flash performance at close to HDD pricing, a product that falls in between the two. This will be the best fit for most use cases for the next 3 to 5 years, until eventually all media is some type of flash. As we pointed out in our post “Why All-Flash Arrays Have No Long-Term Future”, the beauty of software-defined infrastructure is that you become “hardware free”: all your data lives in the software layer and is no longer tied to a particular storage box. You become free to add more space as needed and renew the underlying hardware as you wish, without having to think about complex hardware procurement procedures, migrations, and potential downtime.

This is Part 2 of a series of articles on storage pricing. You can find Part 1 and Part 3 below. Meanwhile, feel free to share your views in the comments, forward the article to a friend or get in touch with us at info@storpool.slm.dev.

Go to “A practical guide on storage pricing in the 21-st century: PART 1”

Go to “A practical guide on storage pricing in the 21-st century: PART 3”
