We are all aware of the staggering rate at which data is being generated today. This exponential growth in storage demand is fueled by the digital transformation taking place globally. Innovation today originates from data, is reinforced by data and is delivered by data. In addition to this insatiable appetite for information, virtualization has transformed the way data storage is designed, as server and endpoint deployments have been reduced to minutes or seconds.

It is now the storage component that is the toughest to get right and takes the longest to provision, especially when you have to manage storage devices local to each and every server. You need to configure RAID, do capacity planning, and engineer around backup and disaster recovery (DR), all on a server-by-server basis. This is why the era of “local” or “Direct Attached Storage” (DAS) is over. Although hardly a new technology, shared storage is the model that today’s data storage solutions are (or should be) based on.

What is Shared Storage?

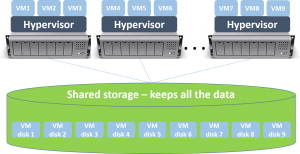

Shared storage is a single storage resource pool that is shared by multiple computers or servers. It allows servers to save data and files on a shared storage system that is designed to be independent of any individual server or computer. It is also designed to be faster, more reliable and easier to scale than storage local to each server. This basic concept has been used for years to save space and network bandwidth. Shared storage technology simplifies the processes of accessing, migrating and archiving data. It is essential for achieving high availability (HA) and, to a large extent, enables efficient “disaster recovery”, “continuous data protection” (CDP) and “business continuity” storage features.

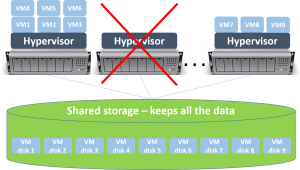

So what does shared storage do? Shared storage “centralizes” data in one “place”, but it is more than just that. In today’s business environment, it is imperative that data be accessible on a 24/7 basis and not be subject to hardware issues. For example, if a physical server fails while the shared storage pool remains available, you can power up the VMs and workloads from the failed server on a different host. The VMs/workloads then continue running without any data loss, since their data was saved on the shared storage system, not on the local drives of the failed server.
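The failover scenario described here can be illustrated with a minimal Python sketch. It is a toy model, not how any particular hypervisor or storage product works: the `Host` class, the `fail_over` function, the host and VM names, and the "least-loaded host" placement rule are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    """A hypothetical compute server; it holds no VM data of its own."""
    name: str
    healthy: bool = True
    running: set = field(default_factory=set)  # names of VMs running here


def fail_over(hosts, failed, shared_pool):
    """Restart every VM from a failed host on the least-loaded healthy host.

    shared_pool maps VM name -> disk contents. It lives outside the hosts,
    so nothing in it is touched when a host dies.
    """
    failed.healthy = False
    survivors = [h for h in hosts if h.healthy]
    if not survivors:
        raise RuntimeError("no healthy host left to restart VMs on")

    placements = {}
    for vm in sorted(failed.running):
        if vm not in shared_pool:
            # Data kept only on the failed server's local drives is unreachable.
            continue
        target = min(survivors, key=lambda h: len(h.running))
        target.running.add(vm)
        placements[vm] = target.name
    failed.running.clear()
    return placements


hosts = [Host("host-a", running={"vm1", "vm2"}), Host("host-b"), Host("host-c")]
shared_pool = {"vm1": "disk-image-1", "vm2": "disk-image-2"}

placements = fail_over(hosts, hosts[0], shared_pool)
print(placements)
```

The point of the model is that `shared_pool` is never modified during failover: only the mapping of VMs to hosts changes, which is why the workloads can resume with their latest data.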

So why is shared storage so important?

There are many reasons why shared storage is critical. Here are the main benefits it delivers:

- Increased Reliability and Higher Availability – using shared storage software minimizes downtime since data is independent of the compute resources. The degradation of a server component doesn’t impact the data itself. Additionally, HA can be achieved by migrating VMs from a failing physical server to another server, or by powering them up on another server after a failure. In both cases the VM accesses its latest data, as it was stored on the shared storage space (as visualized above).

- Higher Performance Levels – shared storage systems are designed to meet even the most rigorous storage demands by offering very high I/O rates and low latency, increasingly with all-SSD levels of performance.

- Better Scalability – this is an essential attribute of nearly any aspect of today’s data center and is especially important for data storage. A good shared storage solution scales linearly, without causing downtime or disruption to the applications using it.

- Central Management and Simplicity – one pool of storage to manage, which is operationally easier, compared to managing storage server by server. Performance and size can be easily provisioned and exposed to the application or server which needs them.

- Advanced Data Features – shared storage systems are designed as specialized storage solutions. Their task is to store data in a reliable and effective manner, and they therefore come with many data storage technologies that enable just this: features such as thin provisioning, snapshots and clones, TRIM/DISCARD, zeroes detection, tiering, erasure coding, deduplication and compression, to name just a few. These features ultimately mean a better storage system and a lower total solution cost.

Shared Storage Options

Companies can choose from a number of shared storage solutions, depending on the particular business need and use case. They do not necessarily come in the form of a “storage array” (as is the case with storage software and distributed storage solutions). Here are the most commonly used options.

1. Network Attached Storage

NAS advantages:

– NAS is a simple, inexpensive way to share files among multiple users throughout the enterprise

– A typical NAS is easy to manage and can be deployed quickly

NAS limitations:

– It is pretty much limited to file storage

– It cannot handle the load of transaction-intensive databases or other demanding workloads

2. Storage Area Network

SAN advantages:

– The SAN is a very robust device

– It is designed to withstand a sizable degree of hardware failure by integrating great redundancy into the hardware design

– SAN is designed to provide lightning-fast storage to critical and demanding applications

SAN drawbacks:

– Its very high CapEx cannot be justified for less critical data

– SAN management requires training, or at the very least involves a learning curve

– Proprietary systems make data migration difficult

– Increased opportunity costs due to vendor lock-in

– Upgrades are very time-consuming and require a high degree of planning and preparation

– Usually very hard to scale after a certain size

– Intrusive and risky hardware generation changes

3. Software Defined Storage

If done right, Software Defined Storage (SDS) delivers the best of both worlds. It combines the redundancy, high availability and performance of the traditional SAN with the flexibility and agility of software. SDS offers key advantages such as:

– Significant cost savings – Rather than utilizing expensive proprietary disk arrays, software-defined shared storage utilizes standard x86 components

– Lower Support Costs – Since SDS systems are made up of standard servers and drives, replacement costs are lower and expensive vendor support costs are no longer mandatory

– Increased reliability levels – storage arrays are designed to make single components very reliable, and in doing so lose the battle with the law of diminishing returns. SDS solutions instead use a multitude of standard, interchangeable components to deliver higher levels of reliability

– Reduced vendor lock-in – the underlying hardware of SDS isn’t proprietary, which allows users to change hardware vendors easily

– Scalability – good SDS systems scale in both capacity and performance with every drive or server added to the system. They also keep all data in a software layer, which allows scaling, or even an entire hardware refresh, without any downtime

– Low opportunity costs – SDS is designed to be simple and thus limits opportunity costs as innovations can be easily integrated. IT personnel are already familiar with x86 drive technology so adaptation to these systems is quick and requires less training

– Unified shared storage infrastructure – SDS solutions can run in a “hyper-converged” setup, i.e. on the compute/application servers themselves. You no longer need to invest time and money in single-purpose, specialized storage arrays.
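The reliability argument in the list above, that many ordinary components can together be more reliable than one highly engineered component, comes down to simple arithmetic. The sketch below is a deliberately simplified model: it assumes each copy of the data sits on a separate component, that components fail independently, and that no copy is rebuilt after a failure. The 5% failure probability is an illustrative figure, not a measured one, and `loss_probability` is a hypothetical helper name.

```python
def loss_probability(component_failure_prob: float, copies: int) -> float:
    """Probability that every copy of the data is lost.

    Assumes independent component failures and one copy per component,
    so the chance of losing all copies is p multiplied by itself `copies` times.
    """
    if not 0.0 <= component_failure_prob <= 1.0:
        raise ValueError("component_failure_prob must be between 0 and 1")
    if copies < 1:
        raise ValueError("copies must be at least 1")
    return component_failure_prob ** copies


# Illustrative only: a standard component with an assumed 5% chance of failing
# over some period, holding 1, 2 or 3 copies of the data.
for copies in (1, 2, 3):
    print(copies, loss_probability(0.05, copies))
```

Under these assumptions each additional copy multiplies the chance of data loss by the component failure probability, whereas making a single component that much more reliable typically becomes more expensive with every step. Real systems see correlated failures and also re-create lost copies, so actual figures will differ.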

In the same way that the hypervisor virtualized the bare metal server, Software Defined Storage uses a similar approach to create elastic and redundant shared storage pools. Although there will always be justifications for the traditional, robust hardware SAN, IT leaders are quickly learning the value of this storage transformation. It is now clear that the traditional SAN will be the next mainframe and that the future belongs to Software Defined Storage solutions, for the many benefits they bring.

If you have any questions feel free to contact us at info@storpool.slm.dev
