Storage is currently the most expensive and most complex piece in the data center and an integral part of any Cloud service – be it public, private or hybrid. Customers usually do not have enough information when buying from storage vendors and are often deceived by sales tactics and marketing propaganda. Terms like “software-defined storage”, “storage virtualization”, “server SAN” and many others claim to be the cure for any storage problem.

This blog post is intended for the business leader of any company in the process of buying data storage solutions. It gives practical advice on what to look for and which pitfalls to avoid.

The article also tries to explain in simple terms some of the jargon and practices in the storage industry. It is an attempt to demystify magic marketing statements and help the buyer to make a wise and educated choice.

The 5 Things Storage Vendors Are Not Telling You

1. You might get a huge discount.

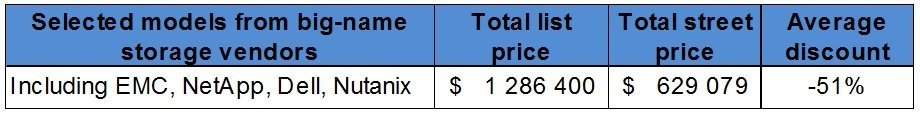

Traditional storage vendors will do almost anything to win that initial contract. For a “first time deal”, customers might get 30-50% off the list price of a storage array. If you are a hard negotiator or a strategic account, discounts might even reach the 80% range. Keep in mind that price reductions usually apply to the hardware only.

Source: StorPool competitive intelligence.

A legacy storage vendor (think two- and three-letter vendors) will rarely give you a comparable discount on support or software packages. Yet it is the services and software that deliver vital functionality and turn a piece of iron into something that actually delivers fast and reliable storage. There might be a discount on support or storage software, but you can expect it to be much lower. Additionally, the professional services that are always needed to design, evaluate and deploy a storage system are not included and may add a significant amount to the total bill.

Beware: This is a tactic called “getting a foot in the door”.

2. …but there is no “free lunch”

Getting that storage array at a solid discount might initially sound like a good deal. But it rarely is – when renewal time comes or when you need to expand the storage solution… you will pay the price – expect no discount then. This is, of course, a form of vendor lock-in. You will always be dependent to some extent on your vendor or technology of choice. However, some vendors lock you in much more than others. It is much easier to change a storage solution that consists of software and hardware provided by two separate vendors. You can decouple and change them independently. And it is much easier to change a piece of standard hardware than a piece of specialized vendor-specific hardware.

Do not base your business case or ROI calculations on the initial discounts you get from your hardware storage vendor. They might give you a discount next time too, but it is not very likely. And even if they do – it will be much lower than the first time.
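To make the point concrete, here is a minimal sketch of the arithmetic. Every figure in it is a hypothetical list price chosen for illustration, not vendor data, and `purchase_cost` is just a helper name invented for this example. It assumes that only the hardware is discounted, and that the expansion gets a much smaller hardware discount than the first deal.

```python
def purchase_cost(hw_list, sw_list, support_list, hw_discount):
    """Cost of one purchase when only the hardware is discounted."""
    return hw_list * (1 - hw_discount) + sw_list + support_list

# All figures below are hypothetical list prices, not vendor data.
HW, SW, SUPPORT = 100_000, 60_000, 100_000

list_total = purchase_cost(HW, SW, SUPPORT, hw_discount=0.0)
initial = purchase_cost(HW, SW, SUPPORT, hw_discount=0.50)

# The headline discount applies to the hardware; this is the discount
# on the whole bill (hardware + software + support).
effective_discount = 1 - initial / list_total

# Expansion a few years later: same hardware list price, no new software,
# hypothetical extra support cost.
planned = purchase_cost(HW, 0, 40_000, hw_discount=0.50)  # what the ROI model assumed
actual = purchase_cost(HW, 0, 40_000, hw_discount=0.10)   # assumed smaller discount

print(f"Effective discount on the first bill: {effective_discount:.0%}")
print(f"Expansion budget gap: {actual - planned:,.0f}")
```

With these made-up inputs, a 50% hardware discount works out to roughly 19% off the whole first bill, and the expansion costs 40,000 more than a business case built on the initial discount would predict.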

3. The margin is built into the drive, not the box

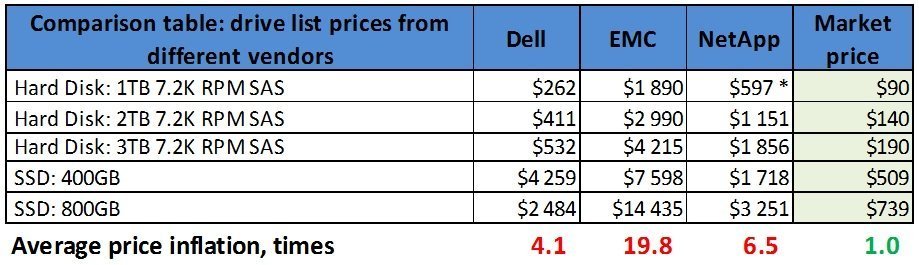

Hardware storage vendors use the well-known freebie or “razor and blades” pricing model: the margin is built into the drive price, not the chassis. So the big box comes at a similar cost, but the drives you need are vendor-specific and typically cost 3 to 15+ (!) times more than essentially the same or equivalent drives bought from a standard vendor.

Sources: Dell: http://configure.us.dell.com/dellstore/config.aspx?oc=brct132&model_id=powervault-md3860i&c=us&l=en&s=bsd&cs=04; EMC: http://www.peppm.org/Products/emc/price.pdf & http://www.emc.com/sales/stateoffl/florida-price-list-2014-05.pdf; NetApp: http://www.peppm.org/Products/netapp/price.pdf; Market price: http://www.newegg.com & http://www.intel.com

* no list price for 1TB 7.2k NetApp drive. Estimated as an average of $/TB of the 2TB and 3TB drives. Prices as of 12 Nov 2014

Fact: There are only 3 companies in the world producing hard disks today, regardless of what the logo sticker on top of the drive says! These companies are Seagate, Toshiba and WD. And while the disks come from these 3 original manufacturers, the drives you buy from your storage vendor carry vendor-flashed firmware and cannot work in another array (or a standard server). Also, the vendor’s storage box works only with that vendor’s drives, so you cannot buy a standard drive and put it in the storage array either. You have to buy these 3-10x more expensive drives.

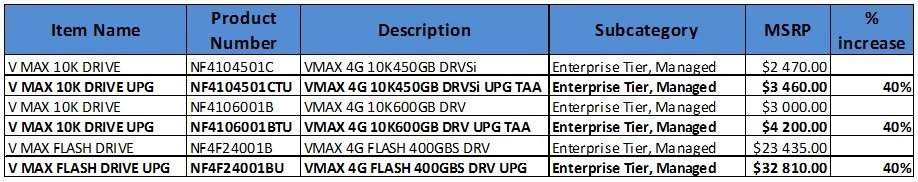

Besides, the price you pay for a drive when you buy it in the box is different from the price of an additional, upgrade drive. And you guessed it – the latter is much higher. Here is an example for you, taken from EMC. Notice that upgrade drives (denoted with “UPG”) are exactly 40% more expensive:

Source: http://www.emc.com/sales/stateoffl/florida-price-list-2014-05.pdf
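The drive arithmetic can be sketched in a few lines. The 40% upgrade markup and the 3x and 10x multiples come from the text above. The market price, the drive count and the function names are hypothetical, chosen only to show the scale of the premium.

```python
UPGRADE_MARKUP_PERCENT = 40  # from the EMC price-list example above

def upgrade_price(in_box_price):
    """Price of the same drive when bought later as an upgrade ("UPG")."""
    return in_box_price * (100 + UPGRADE_MARKUP_PERCENT) / 100

def vendor_premium(market_price, multiple, drives):
    """Extra money paid for vendor drives compared with market-priced ones."""
    return market_price * (multiple - 1) * drives

MARKET_PRICE = 100  # hypothetical market price per drive
DRIVES = 24         # hypothetical number of drive bays

for multiple in (3, 10):
    extra = vendor_premium(MARKET_PRICE, multiple, DRIVES)
    print(f"{multiple}x drive pricing: {extra:,} extra for {DRIVES} drives")

print(f"A 500 in-box drive costs {upgrade_price(500):,.0f} as an upgrade")
```

The premium scales with the number of drives, not with the size of the chassis, which is why the margin sits in the drives.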

4. Price does not tell you enough anymore

Storage is already complex and is getting more and more complex. The new software features work magic. One can dramatically change the behavior of a storage system with every new technology and feature added. As with anything in life, there is a trade-off, though. It is getting tougher to estimate the actual behavior of a storage system.

The typical way of buying storage used to be straightforward: “give me a solution that can deliver 20 TB of raw capacity and 20,000 IOPS of performance”. Not anymore – with software features like thin provisioning, snapshots & clones, caching, tiering and so on, one can considerably change the output parameters of the storage system. Furthermore, the impact of each feature will depend on the customer’s use case – the type of their data, the pattern of their workload. It is getting progressively more challenging to predict the impact of a feature on the actual results a storage system will deliver. Now you need to run a PoC (Proof of Concept) or a pre-deployment test just to get a good estimate of what your solution will actually deliver.

Example: 1 TB is not 1 TB any more – intelligent data reduction storage features such as snapshots, clones, thin provisioning, deduplication, in-flight compression will shrink the original 1 TB to… an unknown amount of space. This gain is use-case specific – depending on your particular data and its usage.

Another trend we notice is big vendors marketing a particular buzzword or feature as the solution to every problem. Maybe the most advertised word we come across is “deduplication”. While “dedup” is a great feature, it has been over-emphasized, mainly by “all-SSD” vendors, as “The Solution for reducing the physical storage footprint of your data (i.e. raw to usable data)”. However, there are a number of other features that achieve the same goal, some of which have a much bigger impact – for example thin provisioning, snapshots, and compression.

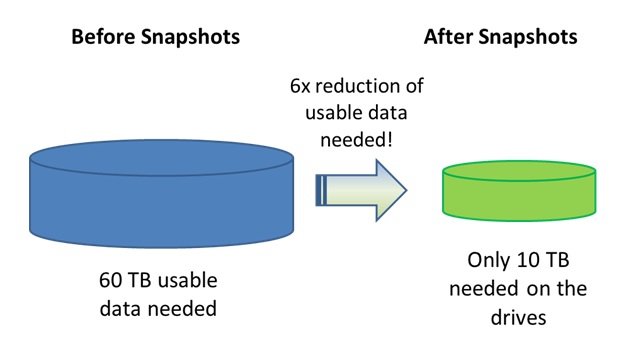

Here is a particular example from a customer in the hosting industry. The use case is a VPS service, running StorPool Storage:

Note: While similar results can also be achieved with deduplication, it uses significant amounts of CPU and RAM, while snapshots do not stress the system.
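As a rough sketch of why thin provisioning combined with snapshots and clones can save so much space in a VPS-style use case: when many VMs are cloned from one base image, the base image is stored once and each VM only consumes space for the data it has actually written. The numbers below are hypothetical and are not the customer's figures. `footprint_gb` is a helper invented for this illustration.

```python
def footprint_gb(num_vms, provisioned_gb, base_image_gb, unique_gb):
    """Return (logical, physical) capacity in GB.

    logical:  what the VMs see (thin-provisioned volume sizes)
    physical: one shared base image plus each VM's own written data
    """
    logical = num_vms * provisioned_gb
    physical = base_image_gb + num_vms * unique_gb
    return logical, physical

# Hypothetical VPS fleet: 1000 VMs with 60 GB volumes, all cloned from
# one 10 GB base image, each having written 10 GB of its own data.
logical, physical = footprint_gb(1000, 60, 10, 10)
ratio = logical / physical
print(f"{logical} GB logical on {physical} GB physical, about {ratio:.1f}:1")

# Counter-example: no shared base image and every volume full of unique
# data. Clones and thin provisioning save nothing here.
full_logical, full_physical = footprint_gb(1000, 60, 0, 60)
print(f"Unique data: {full_logical / full_physical:.1f}:1")
```

The gain comes entirely from shared and unwritten blocks, so it disappears when the data is unique. This is why the result depends on the use case.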

In summary: Educated customers should make decisions based on the actual benefit they get, and they should not insist on a particular technology to deliver these results. As we stated above – the data and the use case have a significant impact on which technology can deliver the most benefits. Customers do not need features, they need solutions to real business problems. It usually comes down to increasing performance and capacity or bringing down the total cost of a solution. In many cases – all at the same time!

5. There is nothing special about that storage box

We see many customers who believe that a storage array is something “special”. This is not true. A storage box is just a regular server on the inside, with a very special cover design and a logo on the outside. And it is not an exceptionally powerful one, either. Apart from the custom box and design, inside you find all the components of a standard server – CPU, RAM, disk controllers, network interfaces and, of course, drives. Nothing special.

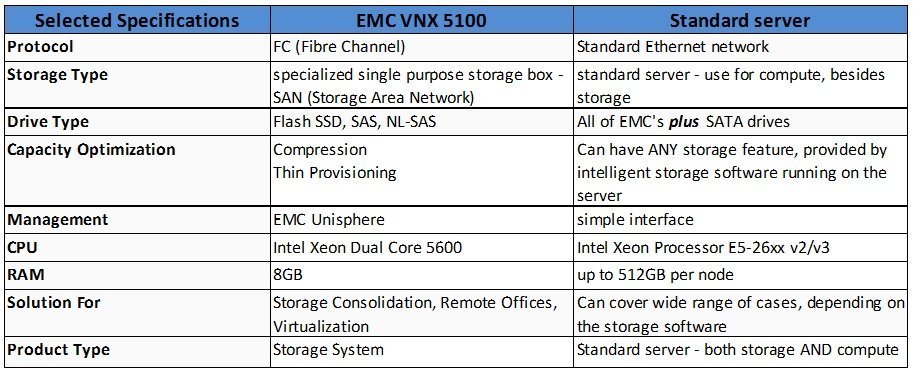

Example: summary of specifications of an EMC VNX 5100 storage array vs. a standard server:

The components in this box are well combined and tested together, but this is not something that is particularly special or hard to do. All software-defined storage vendors provide reference configurations or preselected commodity servers that can be used. These configurations can then be used as a standard building block of a scalable storage system.

If you have any questions feel free to contact us at info@storpool.slm.dev


3 Comments

I think I just got dumberer reading this story! Can you explain how “snapshots” turn 60TB into 10TB? How about a complete explanation on this one. Let's say I have a working set of 60TB (NOT 6 copies of a 10TB working set of data). What can snapshots do other than give me a point in time backup copy of that 60TB of data? There is a TON of value in compression and deduplication as in many (not all) cases it can shrink your data footprint AND in some cases (like virtualization) can potentially help increase performance (when combined with other technologies like SSD caching). Can you build a storage server that can perform well and do many things that a specialty array can do? Yes. Are most IT users going to be able to fine-tune it to maximize that server? Probably not. Will that IT user have to spend a good portion of time managing the server he/she built? For sure. And when there are performance or technical problems you are on your own… good luck when you implemented this storage server for a business critical application that the company’s revenues depend on. Not that I’m an EMC fan either, but even with your example you are using a system that is often purchased for a business need, an OK performance system (maybe not the fastest), but one with high-availability features that has pretty good up-time even when there are critical firmware updates. Much easier to update/upgrade while application is still online than doing so with a storage server/server cluster. I also disagree with your comments about expansions not being discounted. I do sell many storage solutions and I honestly cannot think of one who does not discount future expansions. Maybe not to the same level as the initial purchase (percentage discount), but that has a lot to do with the initial purchase includes the bulk of the manufacturer’s intellectual property (OS, features…) that they do put a price tag on therefore has the ability to be discounted at a higher rate.

Overall I give this story a D- as it is very misleading (I don’t give it an F only because I’m not an EMC partner and I always like to see them on the short end of the stick).

Thank you for the interest in this article.

Let me address your comments one by one in a structured manner.

> Can you explain how “snapshots” turn 60TB into 10TB?

There might be a slight difference of terminology here. When we say “snapshots” we mean the combination of copy-on-write, snapshots and clones of base images. They are simply fused together in our minds. In some typical use-cases there are a lot of VMs that are based on the same base image containing the operating system and full software stack (e.g. Ubuntu/Apache/MySQL/Wordpress). In these use cases, we have seen in practice a 6:1 gain overall from thin provisioning and snapshots/clones.

Obviously, if you have 60 TB of unique data as you describe, then snapshots and clones won’t help you.

> Are most IT users going to be able to fine-tune it to maximize that server? Probably not.

I agree with you. The typical IT user is not capable of fine-tuning a software-defined storage system. And there are a lot of parameters which need to be controlled, not only in the operating system and software, but also in low level hardware settings. This is why our service includes tuning of StorPool for your particular environment and we’ve developed tools to perform this tuning quickly and reliably.

> Will that IT user have to spend a good portion of time managing the server he/she built? For sure.

I disagree. A storage system built on standard x86 hardware and good quality storage system software behaves in the same way as a storage appliance.

> And when there are performance or technical problems you are on your own… good luck when you implemented this storage server for a business critical application that the company’s revenues depend on.

You are not on your own. You have a technology provider there to help you. The same as if you buy an appliance. Well, maybe some software-defined storage vendors don’t perform as well in this respect. So pick your SDS vendor carefully.

> Much easier to update/upgrade while application is still online than doing so with a storage server/server cluster.

StorPool clusters in production are upgraded in-service and with no application impact. Just upgrade the servers in the cluster one by one on a rolling basis. We are of course talking about our solution, since that is the one we are experts in.

> I also disagree with your comments about expansions not being discounted.

In our experience as a purchaser of IT technology, vendors have used vendor-locked hardware to negotiate a higher price per unit for an upgrade than for the initial purchase. In a software-defined environment, the vendor cannot do this. So yes, as we stated in the article, even when you get a discount, it is still a smaller discount, because of lock-in.

> Overall I give this story a D- as it is very misleading

We listen carefully to all feedback and opinions, so thank you for your honesty.

Well, most of the points in this blog state the obvious. Prospective storage customers will be given a “once-in-a-lifetime” discount upfront to do the deal. The “evils” of vendor lock-in are well understood, but some lock-ins may be necessary at some level. Storage vendors always charge more for their HDDs and SSDs than you can get them for elsewhere, but most people won’t bother doing that out of concerns for their warranty and support. The value of a storage system is now in the software, not in proprietary hardware/firmware combinations. The whole movement to software-defined storage has made the case for treating the hardware as a commodity, but some commodity hardware is better than others. It is good for storage customers to have choices for storage hardware from multiple suppliers. The ODM storage server market has proved that point. What may be a stronger influence on customer behavior is their comfort level with their incumbent storage vendor(s) and their unwillingness to take a chance with a new vendor. Finally, the incumbent enterprise storage vendors are not above using FUD to retain their customers because it costs them 10X as much to get a new customer to replace every existing customer that defects.